Deploying vision language models

One tool GM is developing to tackle these nuanced scenarios is the Vision Language Action (VLA) model. Starting with a standard Vision Language Model, which leverages internet-scale knowledge to make sense of images, GM engineers add specialized decoding heads and fine-tune them for distinct driving-related tasks. The resulting VLA can make sense of vehicle trajectories and detect 3D objects on top of its general image-recognition capabilities.

These tuned models enable a vehicle to recognize that a police officer’s hand gesture overrides a red traffic light or to identify what a “loading zone” at a busy airport terminal might look like.

These models can also generate reasoning traces that help engineers and safety operators understand why a maneuver occurred — an important tool for debugging, validation, and trust.


Testing hazardous scenarios in high-fidelity simulations

The trouble is that driving requires split-second reaction times, so any excess latency is a critical problem. To address this, GM is developing a “Dual Frequency VLA.” In this design, a large-scale model runs at a lower frequency to make high-level semantic decisions (“Is that object in the road a branch or a cinder block?”), while a smaller, highly efficient model handles the immediate, high-frequency spatial control (steering and braking).

This hybrid approach allows the vehicle to benefit from deep semantic reasoning without sacrificing the split-second reaction times required for safe driving.
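The two-rate idea can be illustrated with a minimal Python sketch. Everything here is a hypothetical stand-in rather than GM's implementation: `slow_semantic_model`, `fast_controller`, the `slow_every` ratio, and the braking formula are assumptions chosen only to show a slow semantic path feeding a fast control path.

```python
from dataclasses import dataclass


@dataclass
class SemanticHint:
    """Slow-path output: a coarse judgment about the object ahead (hypothetical)."""
    obstacle_is_solid: bool


def slow_semantic_model(frame: dict) -> SemanticHint:
    # Stand-in for the large model: decides what the object is.
    return SemanticHint(obstacle_is_solid=frame["object"] == "cinder block")


def fast_controller(distance_m: float, hint: SemanticHint) -> float:
    """Fast-path stand-in: returns a brake command in [0, 1]."""
    if not hint.obstacle_is_solid:
        return 0.0
    return min(1.0, 10.0 / max(distance_m, 1.0))


def run(frames: list[dict], slow_every: int = 10) -> list[float]:
    # Conservative default; it is replaced at tick 0 by the first slow-path result.
    hint = SemanticHint(obstacle_is_solid=True)
    commands = []
    for tick, frame in enumerate(frames):
        if tick % slow_every == 0:      # low-frequency semantic update
            hint = slow_semantic_model(frame)
        # The high-frequency controller runs every tick with the latest hint.
        commands.append(fast_controller(frame["distance_m"], hint))
    return commands
```

In this toy version the controller acts on a hint that can be up to `slow_every - 1` ticks old, which is the trade-off a dual-rate design has to manage.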

But dealing with an edge case safely requires that the model not only understand what it is looking at but also understand how to sensibly drive through the challenge it’s identified. For that, there is no substitute for experience.

That is why, each day, we run millions of high-fidelity closed-loop simulations, equivalent to tens of thousands of human driving days compressed into hours of simulation. We can replay actual events, modify real-world data to create new virtual scenarios, or design new ones entirely from scratch. This allows us to regularly test the system against hazardous scenarios that would be nearly impossible to encounter safely in the real world.


Synthetic data for the hardest cases

Where do these simulated scenarios come from? GM engineers employ a whole host of AI technologies to produce novel training data that can model extreme situations while remaining grounded in reality.

GM’s “Seed-to-Seed Translation” research, for instance, leverages diffusion models to transform existing real-world data, allowing a researcher to turn a clear-day recording into a rainy or foggy night while perfectly preserving the scene’s geometry. The result? A “domain change”—clear becomes rainy, but everything else remains the same.


High-fidelity simulation isn’t always the best tool for every learning task. Photorealistic rendering is essential for training perception systems to recognize objects in varied conditions. But when the goal is teaching decision-making and tactical planning—when to merge, or how to navigate an intersection—the computationally expensive details matter less than spatial relationships and traffic dynamics. AI systems may need billions or even trillions of lightweight examples to support reinforcement learning, where models learn the rules of sensible driving through rapid trial and error rather than relying on imitation alone.

To this end, General Motors has developed GM Gym, a proprietary multi-agent reinforcement learning simulator. It serves as a closed-loop simulation environment that can both simulate high-fidelity sensor data and model thousands of drivers per second in an abstract environment known as “Boxworld.”
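To give a feel for what an abstract environment of this kind reduces driving to, here is a toy Python sketch of a single simulation step. It is not GM Gym or Boxworld: the `Box` type, the one-lane geometry, the time step, and the reward weights are all assumptions made for illustration.

```python
from dataclasses import dataclass


@dataclass
class Box:
    x: float             # front-bumper position along the lane, in metres
    v: float             # speed, in m/s
    length: float = 4.5  # assumed vehicle length, in metres


def step(ego: Box, lead: Box, accel: float, dt: float = 0.1) -> tuple[float, bool]:
    """Advance both boxes by dt seconds and return (reward, collided)."""
    ego.v = max(0.0, ego.v + accel * dt)   # no reversing in this toy model
    old_x = ego.x
    ego.x += ego.v * dt
    lead.x += lead.v * dt
    gap = (lead.x - lead.length) - ego.x   # lead rear bumper minus ego front bumper
    collided = gap <= 0.0
    # Reward progress (metres travelled) and penalize collisions heavily.
    reward = (ego.x - old_x) - (100.0 if collided else 0.0)
    return reward, collided
```

Because each step is a handful of arithmetic operations with no rendering, an environment shaped like this can be stepped very quickly, which is the property the article attributes to abstract simulation.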

By focusing on essentials like spatial positioning, velocity, and rules of the road while stripping away details like puddles and potholes, Boxworld creates a high-speed training environment for reinforcement learning models, operating 50,000 times faster than real time and simulating 1,000 km of driving per second of GPU time. This method allows us not just to imitate humans but to develop driving models with verifiable objective outcomes, like safety and progress.
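A quick back-of-the-envelope check shows what the two throughput figures imply together. The sketch below assumes, purely for illustration, that both numbers describe the same single stream of simulated driving on one GPU; the article does not say how they are measured.

```python
SPEEDUP = 50_000            # simulated seconds per wall-clock second (from the article)
KM_PER_GPU_SECOND = 1_000   # simulated kilometres per second of GPU time (from the article)

# Simulated driving time covered by one second of GPU time.
sim_hours_per_gpu_second = SPEEDUP / 3600

# Average speed implied if both figures describe the same workload (an assumption).
implied_speed_kmh = KM_PER_GPU_SECOND / sim_hours_per_gpu_second

# Simulated distance covered in one hour of GPU time.
km_per_gpu_hour = KM_PER_GPU_SECOND * 3600

print(f"{sim_hours_per_gpu_second:.1f} simulated hours per GPU-second")
print(f"{implied_speed_kmh:.0f} km/h implied average speed")
print(f"{km_per_gpu_hour:,} km per GPU-hour")
```

Under that assumption, one GPU-second covers roughly 13.9 hours of driving at an implied average of about 72 km/h, and one GPU-hour covers 3.6 million km.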

From abstract policy to real-world driving

Of course, the route from your home to your office does not run through Boxworld. It passes through a world of asphalt, shadows, and weather. So, to bring that conceptual expertise into the real world, GM is one of the first to employ a technique called “On Policy Distillation,” where engineers run their simulator in both modes simultaneously: the abstract, high-speed Boxworld and the high-fidelity sensor mode.

Here, the reinforcement learning model—which has practiced countless abstract miles to develop a perfect “policy,” or driving strategy—acts as a teacher. It guides its “student,” the model that will eventually live in the car. This transfer of wisdom is incredibly efficient; just 30 minutes of distillation can capture the equivalent of 12 hours of raw reinforcement learning, allowing the real-world model to rapidly inherit the safety instincts its cousin painstakingly honed in simulation.
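The teacher-student loop can be sketched in a few lines of Python. This is a toy under stated assumptions, not GM's method: the teacher rule, the noisy "sensor" feature, the linear student, and all constants are hypothetical. The one property it does preserve is that the student's own actions drive the simulation, while the teacher labels the states the student actually reaches.

```python
import random


def teacher_action(gap_m: float) -> float:
    """Hypothetical teacher policy: sees the exact abstract state (gap to lead car)."""
    return 0.1 * (gap_m - 20.0)


def student_feature(gap_m: float, rng: random.Random) -> float:
    """Hypothetical sensor-mode view: the same gap, noisy and rescaled."""
    noisy_gap = gap_m + rng.gauss(0.0, 0.5)
    return (noisy_gap - 20.0) / 10.0


def distill(episodes: int = 200, steps_per_episode: int = 20,
            lr: float = 0.05, seed: int = 0) -> tuple[float, float]:
    rng = random.Random(seed)
    w, b = 0.0, 0.0                      # student: action = w * feature + b
    for _ in range(episodes):
        gap = rng.uniform(10.0, 40.0)    # random starting gap, in metres
        for _ in range(steps_per_episode):
            f = student_feature(gap, rng)
            action = w * f + b
            # The teacher labels the state that the student's own driving produced.
            err = action - teacher_action(gap)
            w -= lr * err * f            # gradient step on squared error
            b -= lr * err
            # On-policy: the student's action, not the teacher's, moves the world.
            gap = min(60.0, max(0.0, gap - action))
    return w, b
```

After training, the student's weights approximate the teacher's rule even though the student only ever saw the noisy view. The efficiency figure quoted above works out to a 24-to-1 ratio (12 hours against 30 minutes).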

Designing failures before they happen

Simulation isn’t just about training the model to drive well, though; it’s also about trying to make it fail. To rigorously stress-test the system, GM uses a differentiable pipeline called SHIFT3D. Instead of just recreating the world, SHIFT3D actively modifies it to create “adversarial” objects designed to trick the perception system. The pipeline takes a standard object, like a sedan, and subtly morphs its shape and pose until it becomes a “challenging,” fun-house version that is harder for the AI to detect. Optimizing for these failure modes allows engineers to discover safety risks before they ever appear on the road. Iteratively retraining the model on these generated “hard” objects has been shown to reduce near-miss collisions by over 30%, closing the safety gap on edge cases that might otherwise be missed.
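The core idea of using gradients to push an object toward a detector's blind spot can be shown with a toy Python sketch. This is not the SHIFT3D pipeline: the logistic "detector," the three-number shape vector, and the perturbation budget `max_delta` are all assumptions standing in for a real perception model and a real shape representation.

```python
import math


def detector_score(shape: list[float], weights: list[float], bias: float) -> float:
    """Toy differentiable detector: logistic score that an object is detected."""
    z = sum(w * s for w, s in zip(weights, shape)) + bias
    return 1.0 / (1.0 + math.exp(-z))


def adversarial_shape(shape: list[float], weights: list[float], bias: float,
                      max_delta: float = 0.2, step: float = 0.05,
                      iters: int = 50) -> list[float]:
    """Projected gradient descent on the shape to lower the detection score."""
    adv = list(shape)
    for _ in range(iters):
        p = detector_score(adv, weights, bias)
        # Analytic gradient of the logistic score with respect to each shape parameter.
        grad = [p * (1.0 - p) * w for w in weights]
        adv = [a - step * g for a, g in zip(adv, grad)]
        # Project back into a small box around the original so the change stays subtle.
        adv = [min(s + max_delta, max(s - max_delta, a)) for a, s in zip(adv, shape)]
    return adv


# Hypothetical sedan parameters: length, width, height in metres.
sedan = [4.5, 1.8, 1.4]
weights, bias = [0.8, 1.0, -0.5], -3.0
hard_sedan = adversarial_shape(sedan, weights, bias)
```

The perturbed shape stays within `max_delta` of the original in every dimension yet scores lower on the toy detector. Objects produced this way are the kind of "hard" examples that would be fed back into retraining.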

Even with advanced simulation and adversarial testing, a truly robust system must know its own limits. To enable safety in the face of the unknown, GM researchers add a specialized “epistemic uncertainty head” to their models. This architectural addition allows the AI to distinguish between standard noise and genuine confusion. When the model encounters a scenario it doesn’t understand (a true “long tail” event), it signals high epistemic uncertainty. That signal serves as a principled criterion for data mining, automatically flagging the most confusing and high-value examples for engineers to analyze and add to the training set.
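One common way to approximate epistemic uncertainty is disagreement across an ensemble of models, and the Python sketch below uses that as a stand-in. It is not GM's uncertainty head: the ensemble, the scene names, and the confidence values are invented for illustration.

```python
from statistics import pvariance


def epistemic_score(member_predictions: list[float]) -> float:
    """Disagreement proxy: variance of one scene's predictions across ensemble members."""
    return pvariance(member_predictions)


def flag_for_review(scenes: dict[str, list[float]], top_k: int = 1) -> list[str]:
    """Return the top_k scene names with the highest disagreement."""
    ranked = sorted(scenes, key=lambda name: epistemic_score(scenes[name]), reverse=True)
    return ranked[:top_k]


# Hypothetical detection confidences from four ensemble members per scene.
scenes = {
    "clear_highway": [0.91, 0.90, 0.92, 0.91],       # confident, members agree
    "heavy_rain": [0.55, 0.56, 0.54, 0.55],          # unsure, but members agree (noise)
    "overturned_trailer": [0.10, 0.85, 0.40, 0.95],  # members disagree (confusion)
}
flagged = flag_for_review(scenes, top_k=1)
```

Here `heavy_rain` has low confidence but low disagreement, so it is treated as ordinary noise, while `overturned_trailer` is flagged because the members contradict one another.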

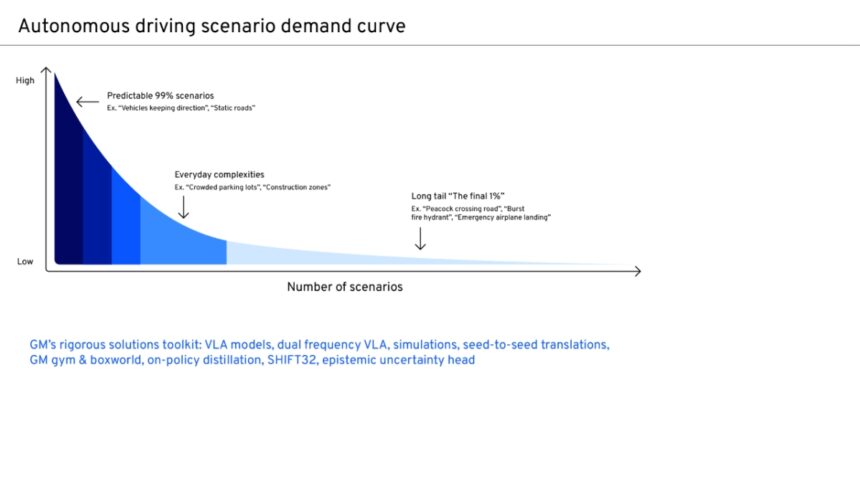

This rigorous, multi-faceted approach—from “Boxworld” strategy to adversarial stress-testing—is General Motors’ proposed framework for solving the final 1% of autonomy. And while it serves as the foundation for future development, it also surfaces new research challenges that engineers must address.

How do we balance the essentially unlimited data from Reinforcement Learning with the finite but richer data we get from real-world driving? How close can we get to full, human-like driving by writing down a reward function? Can we go beyond domain change to generate completely new scenarios with novel objects?

Solving the long tail at scale

Working toward solving the long tail of autonomy is not about a single model or technique. It requires an ecosystem — one that combines high-fidelity simulation with abstract learning environments, reinforcement learning with imitation, and semantic reasoning with split-second control.

This approach does more than improve performance on average cases. It is designed to surface the rare, ambiguous, and difficult scenarios that determine whether autonomy is truly ready to operate without human supervision.

There are still open research questions. How human-like can a driving policy become when optimized through reward functions? How do we best combine unlimited simulated experience with the richer priors embedded in real human driving? And how far can generative world models take us in creating meaningful, safety-critical edge cases?

Answering these questions is central to the future of autonomous driving. At GM, we are building the tools, infrastructure, and research culture needed to address them — not at small scale, but at the scale required for real vehicles, real customers, and real roads.

###

Training driving AI at 50,000× real time: GM’s approach to scalable autonomy

2026-03-17 15:30:00

media.gm.com

https://media.gm.com/content/media/us/en/gm/home.detail.html/content/Pages/news/us/en/engineering/2026/mar/0310-snyder.html
